Edge Computing – an emerging research field

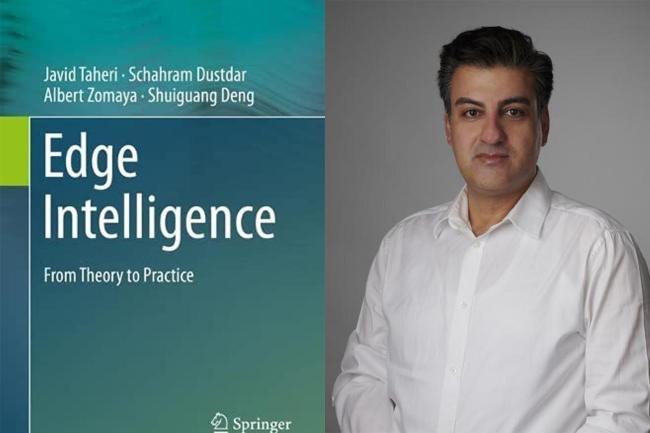

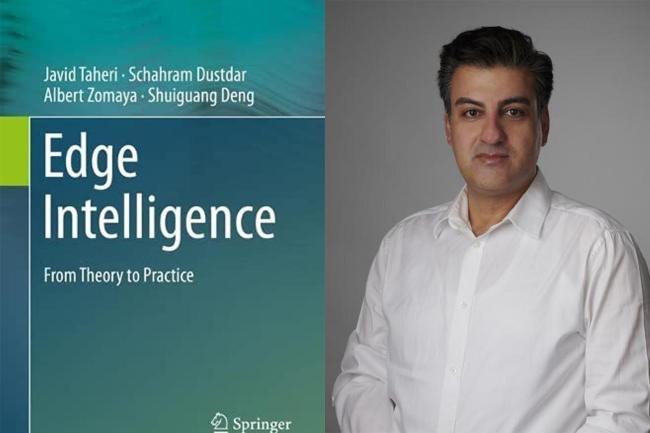

2023-05-26

Edge computing is one of the drivers for future industries and a fundamental component in 5G networks, says Professor Javid Taheri. He has recently published a reference book on the subject, Edge Intelligence: From Theory to Practice. The book provides a valuable introduction to a nascent field of research.

What are the current shortcomings of cloud computing systems that Edge Computing solves?

Despite having many powerful servers, cloud computing resources are usually far from the end users, and are thus not suitable for tasks with stringent requirements, mostly those related to the end-to-end latency of services and/or the privacy of personal data. Edge Computing was created in direct response to such issues.

What are the risks of using Edge Computing?

Edge Computing brings computing resources closer to the source of data, and thus expands the computing surface of services. This, in turn, also expands the attack surface of such highly distributed systems and platforms, making them naturally vulnerable to many security threats, such as Distributed Denial of Service (DDoS) attacks.

How does Edge Computing tie to Artificial Intelligence and Machine Learning?

Edge Computing platforms are highly distributed systems that consist of many heterogeneous components, ranging from powerful back-end cloud computing servers to small IoT devices. Optimizing resources (both for computing and networking) on these highly volatile platforms requires making many joint decisions that are usually too complex for current optimization techniques to make in due time. Artificial Intelligence and Machine Learning are powerful tools that can progressively learn from working systems, and consequently optimize them on the fly.

Why is Edge Computing important for the future of latency research?

Low latency is an unavoidable requirement for the realization of many novel services (such as those in smart buildings, factories, and cities). Latency research in Edge Computing is more complex than traditional latency research in the networking community. The networking community mostly aims to optimize the transmission of data along networking links and devices, whereas latency research in Edge Computing also includes minimizing the time spent on the computing tasks that are supposed to be performed on the computing parts of such systems.

How does Edge Computing benefit the everyday experience of individuals in a society?

Besides creating better factories and industries that eventually lead to better products, Edge Computing can also enable the creation of direct services to individuals. More personalized shopping (e.g., on-the-spot recommendation of products in retailers) and smarter vehicles (e.g., online diagnosis of faults and dynamic platooning of cars) are among the most tangible examples of how Edge Computing can improve our day-to-day life.

What is the content of your new book (Edge Intelligence: From Theory to Practice) and how does it help?

“Edge Intelligence: From Theory to Practice” is a reference book suitable for teaching all relevant topics of Edge Computing and its ties to Artificial Intelligence and Machine Learning approaches. The book starts from the basics and advances step by step to the ways Artificial Intelligence and Machine Learning concepts can help, or benefit from, Edge Computing platforms. Using practical labs, each topic is brought down to earth so that students can practice what they have learned with real equipment and industry-approved software packages.

Read more about the book “Edge Intelligence: From Theory to Practice”